Podcast: Brian Mushonga and Will Thrower

I spoke with Brian Mushonga and Will Thrower, two members of the three-person team at Caledon Investment Partners, about a recent memo they published titled ‘Hyperscaler Capex is Hitting Physical Limits’. This is the fourth time I’ve had Brian and Will on the podcast, and the memo was a great excuse to catch up on their thinking and to hear where they’re spending time.

Their core argument is that the biggest constraint on the AI buildout is no longer software or even chips alone, but energy infrastructure. As demand for compute explodes, hyperscalers are running into hard bottlenecks in power generation, grid capacity, transformers, turbines, cooling systems, permitting, and skilled labour. Brian and Will explain why these constraints may prove more durable than many investors expect, and why the picks-and-shovels businesses exposed to them could continue to benefit even if the market starts to worry about cyclicality or overinvestment.

We also discuss whether computing has entered a more asset-intensive era, how sustainable the current capex cycle really is, and what the strongest bear arguments against the AI boom get right and wrong. Along the way, we explore why Caledon prefers owning the bottlenecks around the hyperscalers rather than the hyperscalers themselves, how China’s electrification journey compares with America’s, and why efficiency gains in AI may increase demand rather than reduce it. The conversation concludes with a look at Caledon’s research process, which is built around mapping value chains end-to-end to identify the scarce assets that matter most.

As always, this conversation is for general discussion only, not investment advice.

You can read the transcript below or listen to our conversation on Apple Podcasts, Spotify or wherever you get your podcasts.

This transcript has been lightly edited for clarity, grammar and punctuation.

Graham Rhodes: This is Graham Rhodes, and you're listening to the Long River Podcast, where I share conversations with my peers on global business and investing as we see it from Asia. My guests today are Brian Mushonga and Will Thrower, two of the three-person team at Caledon Investment Partners.

Caledon invests in the energy transition and electrification, often through the picks-and-shovels businesses that benefit when power, equipment, and infrastructure are tight. This is the fourth time I've had Brian and Will on the podcast, which tells you how much I enjoy learning from them.

Brian is from Zimbabwe and now lives and works in Johannesburg. He has worked in insurance as well as on the sell side, and now on the buy side.

Will is American, and after university, he moved to China, where he transitioned from business into investing, driven by his love of hunting for cheap stocks around the world.

Brian, Will, welcome back to the podcast.

Will Thrower: Thanks for having us.

Brian Mushonga: Yeah, thanks, Graham. It's good to be back.

Before I go any further, we have to give this quick reminder. Today's conversation is for general information and discussion only. It does not constitute investment advice, a recommendation, or an offer or solicitation to buy or sell any security or fund. You should not rely on it when making investment decisions, and it may not be suitable for your circumstances.

With that said, why don't you give us a quick recap of Caledon, how you came together, your investment strategy, and how that led you to the AI buildout?

BM: Will, I'll go first.

Thanks again. A few years ago, James and I were focused on energy stocks in China, and in particular, the renewable space. At some point, we figured, look, you can't make any kind of money in China unless you focus on the bottlenecks. And to do that, you had to map the value chains end to end.

It just so happened that Will had done quite a lot of work on precisely mapping value chains in the renewable space, and that's how we teamed up with Will. Eventually, we pivoted from looking at the energy space in China to the US. The primary driver for that was the realisation that the US was set to reindustrialise, to reshore a lot of manufacturing back to the US. We figured the common denominator in all of that would be energy and energy infrastructure. So when AI then came about, it just proved to be an accelerant to demand for bottlenecks in energy infrastructure. So that's what led us to the AI infrastructure play in the US.

WT: So, Graham, I really have to thank you for putting me on. Years ago, that first one we did on Tesla, I was in Abu Dhabi in a hotel waiting for the Australian visa. Someone who listened got in touch. I never actually met him. He heard that I said I was going to move to Melbourne, and that's where he was based, but for some reason, he was in Malaysia, and he never ended up coming back.

He was working for some big $10 billion fund in Melbourne. They were focused on similar kinds of ideas, interested in global warming, climate change, and electric vehicles. I had one brief conversation with his boss, and he said, "Yeah, I think we're interested in this space. There might be a job. No promises, but there might be a job."

So when it came time to start blogging again, put feelers out and see what was out there, I thought, all right, I'm going to try to get this guy's attention, since he's not getting back to me. And that's why I decided to write about the Chinese renewable supply chains. I don't think he ever read it. Brian read it instead, and here we are.

So I would just add, if there are any young people in the audience looking to get into this industry, that's the only way I know how to get a job. You've got to put your work out there, and put work out there that you care about, because you're applying for the job that you want. So when Brian got in touch, it wasn't an interview. It was "Let's go." And it's been great working with Brian and James.

I invited you guys on to talk about a memo that you published last month called ‘Hyperscaler Capex is Hitting Physical Limits’.

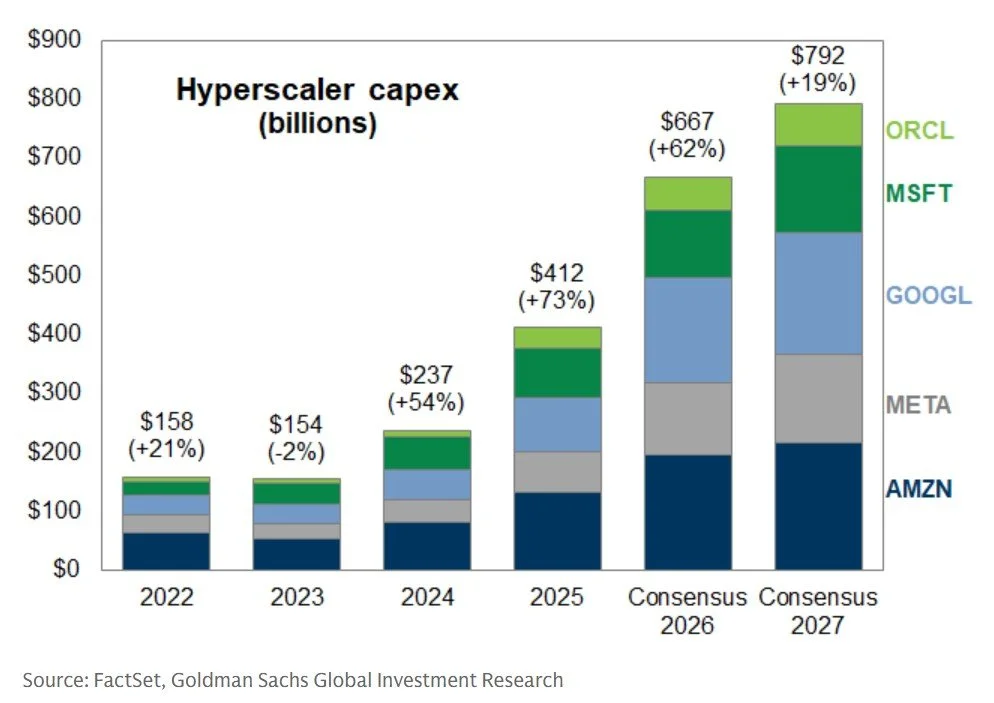

When I spoke with Brian and James back in May 2024 to record a podcast like this, we led with this shocking forecast that big tech capex would hit $195 billion in 2025. In fact, it was a multiple of that. This year alone, Amazon has forecast that it will spend $200 billion, with estimates for the industry as a whole now as high as $700 billion. Guys, what's going on? Why is demand for compute soaring?

Why don't you talk to us about that memo and what your thesis is?

WT: Yep. So AI has a lot of characteristics of a flywheel. We're seeing that the growth in AI infrastructure is being driven by scaling laws that observe that more compute improves the models by a predictable amount, which increases performance, lowers costs, and ultimately increases demand again, which funds more compute. So we're seeing that flywheel spin faster and faster.

Where we are today, you're just starting to see the rollout of agentic AI, and these agentic capabilities are just starting to go mainstream and become available in tools that you already use. The revenue model for the cloud providers is usage-based rather than subscription-based, so scaling up more compute directly translates into generating more revenue.

BM: Maybe just to add to that, the models are getting better, and they're getting more reliable. And as that happens, people are using them more, right, which just creates its own demand. And what's leading to that improvement, as Will points out, is the scaling laws. The more compute you have, the better the models you output, and the more people use them. So yeah, I think the demand is justified, and I think it's going to be sustained for quite a while.

So the thesis is that the primary physical limit to the expansion of AI, particularly in the US at the moment, is access to electricity. AI needs a lot of electricity, and what you find is that demand for AI, which is exponential, is hitting limits around permitting and physical bottlenecks. We are talking about permitting. We are talking about equipment such as transformers. You basically have a grid in the US that didn't expand for two decades, now facing a massive upsurge in demand. So you are constrained by your inability to rapidly expand the US electrical grid.

And specifically, why don't you give us some examples of the physical bottlenecks? You mentioned land and permitting, but I think you've seen some anecdotes in your investee companies that you might share with us today.

BM: Yeah, things like equipment, right? If you are going to generate and transmit electricity, you need transformers, you need turbines, and this is equipment that's produced by a highly specialised, inelastic manufacturing base. So we see it, for instance, in the order books for companies such as Siemens Energy, where the earliest you can get a turbine out of Siemens is probably 2029 or 2030 now.

Part of the reason for that is that the manufacturing of things like transformers has a large manual component. A lot of it is bespoke assembly, so you can't automate the manufacturing of some of this equipment. Outside of China, there's just limited capacity. It requires specialised labour. You've got to train technicians, and that training takes years. So there are physical bottlenecks, there are labour bottlenecks, and it's difficult for manufacturers just to ramp up production overnight.

In terms of where we see it in the portfolio, we see it in the order books. We see it in the order books of construction and engineering firms that we own, such as Comfort Systems. I've already mentioned Siemens Energy, and we also own GE Vernova in the portfolio.

WT: Yeah, just to discuss that point about labour further, if you think about it, the US population is barely growing. The labour force overall is barely growing. And as Brian alluded to earlier, you had two decades where the grid wasn't really growing. So you had people retiring and exiting the industry. The number of electricians in the US today is roughly the same as it was two decades ago.

These EPC contractors, like Comfort Systems, have been consolidating the industry over time, and they're basically the biggest source of that skilled labour. It's very difficult to accelerate growth in those professions because they require years of training, and because they may require people to move their families to Texas.

If we look at the rate of growth of demand, it's much higher than you could reasonably expect labour supply to grow. So these companies have to find other ways to improve labour efficiency, like focusing on modular construction, where they put systems together in a factory, whereas before they would have done it on site. So yeah, that's a big one for us.

And so I'm curious. The price signals are very strong. All the incentives are there for the supply chain to increase capacity. Why hasn't it yet? I'm talking specifically, you explained labour, and obviously, it's hard to give someone 10 years of experience without 10 years of time. But what about, for example, Siemens Energy and GE Vernova?

BM: Yeah, so I think there are several factors, Graham. One is that I think the companies are very cautious about ramping up production, given past instances of having increased capacity only to see demand fall away, right? You'd have to go back 15 or 20 years for the last instance of this, so there's an element of caution.

Then there is the fact that to make things like transformers, a large part of that process is manual, and you simply can't ramp up production fast enough. So I think there are a number of physical factors, and set against that is this huge upsurge in demand that's come primarily from AI data centres and demand for power, but also from reshoring activities, right? So you have this mismatch between supply and demand that's proving very difficult to close.

WT: To put it very simply, if demand has continued to surprise the geniuses on Wall Street, then it's no surprise that it's also surprising everyone else. So if you have to make these investment decisions years in advance, but you are not inside the AI bubble, so to speak, maybe you're a bit cautious about investing too aggressively, right?

Yeah. And I remember last time we talked about a company called Vertiv, which is an industrial company that makes cooling systems for data centres, and its financials, frankly, until very recently didn't look that great. So I imagine management was very reluctant to bet the farm on something that had yet to be proven. Do you think?

WT: I mean, it may also just be the case that everyone is investing as much as they possibly can, but it's still not enough. Brian, do you have thoughts on that?

BM: Yeah. Look, I agree with that. I think visibility into demand for a lot of these companies now stretches to 2030, and they're doing as much as they can. I think they're just constrained by the supply chains. You simply can't ramp up fast enough, even if you want to. I think that's the situation we are faced with at this point.

Okay. So maybe a different way to tackle this question would be to ask how sustainable you think this investment cycle is. And I guess, at the core of it, do you think that computing has moved into a new, asset-intensive era, where it simply costs more to deliver what we expect from our software? I'll leave it to you guys.

WT: Yeah, go ahead.

BM: Yeah, go for it.

WT: Okay. I would just point out that we have not yet seen a cycle in data centre GPU spend, which Nvidia has broken out for more than a decade now. They've never had a down year.

Past cycles in consumer GPUs and legacy data centre architecture were driven by dynamics that we don't really see in the industry today, at this point in the development of AI data centres. Nvidia is driving progress now that is much faster than Moore's Law, with their chip architecture improvements, extreme co-design with suppliers, and networking that enables larger and larger GPU clusters to act as one computer.

And instead of improving 2x every two years, like the industry was used to, it's more like 5x to 10x per year. So this just ensures very strong demand for the latest chips.

BM: Whatever chips can be produced.

So just in terms of whether we're entering a new paradigm in terms of the infrastructure required to produce software, yeah, I think so as well. And you can see that in the capex expansion of someone like TSMC, right? They're also very conservative in expanding capex, but they're doing so quite aggressively now, which just tells you that the demand they're seeing down the line for infrastructure, or hardware, is pretty solid.

So I think the investment in compute is going to be sustained for quite a while from where we are at the moment. I would even dare say we're in the early innings of the expansion in compute.

WT: Even though we're focused on the energy side, we do spend a lot of time looking at the semiconductor supply chain. Brian mentioned TSMC, but if you're looking at things like memory, supply is being reserved and contracted two or three years out. Yes, we're expecting this kind of growth to continue for the foreseeable future.

I think we're getting to this earlier than I thought we would, but I understand the arguments that you're making. Accelerated computing is simply much more asset-intensive at the moment. The revenue models are there, and the incentives are there to buy the latest generation of chips, just because the performance justifies it.

But what do you guys say to the people who argue that we're in a bubble? What are some of the strongest arguments you've heard that make you a little bit cautious, and how do you think about those, or maybe even refute them?

BM: Yeah, so I think there are several big arguments around this, right? The first argument is about circular funding: Nvidia investing in its customers, who then buy its chips.

But if you take a step back, a lot of the neoclouds, for instance, are startups. They don't have money. Someone has to invest, and Nvidia is cash-flush. They're oiling the system, as it were.

From an Nvidia perspective, their biggest competitor is not AMD anyway. Their biggest competitor is Alphabet, right? Because they've got the full stack. They've got the TPU, they've got the model, they've got the cloud. If you, as Nvidia, do not oil this ecosystem and broaden it out, this will become Google's race to win. So there's an incentive for Nvidia to have a diversified market out there.

The other big argument that's being put out is that these models are commoditised, and it's a race to the bottom. Related to that, I suppose, is this argument that you're getting cheap, open-source models out of China.

Up until recently, one could have bought the argument that a model with 80 per cent of the capability of the leading-edge model was good enough. But the recent advances that we've seen, for instance, in agentic AI, mean that demand for leading, cutting-edge model capabilities is going to be quite robust, actually.

When you look at the incentives to invest, when you look at the revenue models, when you look at demand for AI, I think it's too soon to be saying that we are in a bubble.

WT: There's another bear thesis for Nvidia, which is seen as the poster child for the AI boom, or bubble. That revolves around the possibility that the depreciation schedules for the GPUs are being stretched out too long. So the hyperscalers that are buying them are recording their useful lives at five years now.

But if Nvidia is coming out with such great chips every year, it should accelerate the obsolescence of these older GPUs. Maybe the actual life is only three years. But what we're actually seeing is that these new chips are enabling such rapid advancements in the models themselves that you can then serve the model with older chips and create much more valuable tokens with the older chips.

So you can look at the installed base out there and see that seven-year-old chips are fully utilised. Three-year-old chips, now that the initial contract is coming off, are being renegotiated for another three years at an even higher price because the value of the models has progressed so quickly.

So yeah, it's an interesting cycle, where the media tends to amplify whatever the bear argument is at the time. Then the internet exchanges the relevant data points that totally disprove it, but that doesn't seem to get disseminated as well as the initial shock.

And we're recording this in March 2026, some three months after the launch of Claude Code, and all the statistics I've seen are just incredible in terms of how exponentially this product is growing and how compute-demanding it is. The simplest explanation I heard was that we're no longer serving humans, who have limited hours of work, limited attention, and limited capacity for work. We're now serving an almost infinite number of agents, who can work for as much time as you can get the GPUs and power them.

WT: Yeah, I mean, I think it will require a lot of people to rethink how they work. Every quarter, companies report, and we do roughly the same tasks around listening to conference calls, summarising those results, and looking around for new ideas. But every quarter, I've noticed that the models just get better, and it influences the way I work.

I think it's now becoming important to take a step back, think about what's keeping you busy, and think like a builder. Use these new tools to enhance whatever your process is. There's a lot of potential there.

What about another argument, which we haven't gotten to yet, which is that the companies paying for this investment are arguably reaching the limits of what they can afford? So the hyperscalers, Alphabet, Amazon, Microsoft, and Meta, have seen substantial reductions in their free cash flow as they've chosen to invest to build up their compute capacity.

And most of those companies have now even begun issuing debt in amounts that I think we've never seen from them before. How much more can this keep growing if the major customers in this investment cycle, the ones doing the investment, simply don't have the capital to keep ploughing into it?

BM: So I think eventually pension funds or retirement funds are going to fund the infrastructure buildout, right, in the same way that they fund commercial buildings or other aspects of infrastructure. They've got the cheapest sources of capital, and the sort of revenue streams or income streams from data centres are a natural fit for the liabilities of pension funds.

So I think that eventually the hyperscalers will simply rent this sort of infrastructure from institutions. And you've already seen that pivot, right? In the US, you've seen institutions such as Brookfield refocus their efforts explicitly on building out AI infrastructure. So I'm not too worried about the buildout becoming too expensive for the hyperscalers.

WT: I would add that as the hyperscalers are increasing their capex, it may look like free cash flow is declining, but operating cash flow is going up, and a lot of that capex is investment in future growth.

When you're looking at the hyperscalers, it's kind of hard to break out what exactly the economics of their AI data centres are, because so much of the compute is used for internal purposes. So you may be more likely to see the impact of that in their income statement. For instance, their operating margins are going up. They're doing more with less.

And part of this investment is also in the shells, so the data centre shells themselves. These will last a lot longer than the chips. Eventually, when the GPUs come to the end of life, they'll be able to swap them out for the latest generation. I think the actual return picture for the hyperscalers is better than what meets the eye.

Yeah, it's an interesting one. We're swapping opex for capex now, aren't we?

WT: Right?

BM: Pretty much, yeah.

But let's go back to the bottlenecks, which is what you guys spend so much of your time identifying and investing behind. You've mentioned a few, like turbines, cooling systems, and labour.

What are the workarounds here? Because the price signals are so high and the demand is so strong. The incentive is there to fix this, right?

BM: Look, I think you've seen, for instance, the hyperscalers looking for alternatives, right? You've seen them, because they can't wait, go behind the meter as an example. You've seen them trying to use alternative fuel sources. An example is fuel cells. You've seen them restarting shuttered nuclear plants that are already connected to the grid, or actually moving to geographies such as Texas with stranded power or a favourable regulatory environment, right? Those are some of the workarounds that we've seen the hyperscalers pursue to try to get access to energy.

And which bottlenecks do you think will get resolved most quickly, and which ones are maybe still being underestimated?

BM: I think the bottlenecks around physical equipment, such as transformers, will persist for quite a while. Part of this is that people underestimate the integration of renewables into the grid system. Renewables, because of their lower capacity factors, require a lot more transformers, for instance, per megawatt of energy capacity compared to traditional thermal plants, due to their distributed nature.

So I think demand for things like transformers will persist. The other thing I think people underestimate is the ageing infrastructure you have in the US grid. If you look at the existing transformer fleet in the US, as an example, over 50 per cent is 40-plus years old. Not only are you trying to meet new demand, you also have to replace the existing infrastructure. So I think those are tailwinds. That just means demand for things like transformers will be more sustained than most people think.

When it comes to turbines, the fact that you can convert turbines that could have been used for jet engines might mean that maybe we resolve turbines before we resolve demand for transformers. But yeah, I think that's on the margins at this point.

WT: I think it's worth pointing out that this isn't really like a material bottleneck where you open a new mine. To solve it, maybe it takes seven years to open the mine, but then it's open, and now the bottleneck is solved, and prices go back to normal.

Because you have this structural exponential growth in demand, even if these suppliers can catch up to that, I think we're still settling at higher prices, better margins, and still very attractive growth.

I think what the market is not giving any of these companies credit for is what happens after the next two years. If it just so happens that the energy bottleneck is solved in two years, things don't just go back to normal for these companies then. They still have a long runway of strong double-digit growth ahead of them.

Can you elaborate on that, and maybe explain why they don't go back to normal or why there wouldn't be a cyclical downturn?

WT: For instance, if you think about the labour bottleneck, maybe the way to solve it is to increase the wages of electricians. Dylan Patel of SemiAnalysis put out a podcast earlier this week. He was arguing that the ultimate bottleneck is somewhere in the semiconductor supply chain, and energy is, you know, a simpler supply chain, so it can more easily be solved.

He talked about the labour bottleneck, but he conceded that maybe wages have to go up another 2x or 3x. But it's not an unsolvable problem. For a company like Comfort Systems that basically owns that labour, the market is not pricing in a 2x to 3x increase in their pricing power anytime soon. And wages are very sticky. So once they go up, they're not going back down. And then there's still a lot of work left to be done. We still have to keep building data centres.

BM: So the other point to make, I guess, around the question of cyclicality is that demand for EPC companies, for instance, or energy in general, is much broader than AI. I mean, we focus on AI because of the amount of capital involved. But you still have reshoring. You still have the electrification of transportation, as an example. The demand driving what's going on at the moment is a lot broader than just AI, and that gives us confidence that demand will be sustained.

And the funny thing is, it's all those seemingly peripheral factors that attracted us to this theme in the first instance. AI was just a cherry on top. The irony is that AI is now the main demand driver, but it's not the only one. I think that's a very important point to make.

WT: Before the AI boom, the world was talking about the energy transition and decarbonising the grid.

Which was going to require a lot of work anyway. Okay. Well, I have to ask the obvious question then. What do you think could derail the thesis?

BM: Yeah, that's a tough one. I think if you came up with a new technology that still advanced AI but didn't require as much energy, that would be quite negative for the thesis. But as we point out, demand for energy infrastructure goes beyond AI.

Another thing is that obviously a lot of what we are invested in is linked, in one way or another, to the rollout of data centres. So if you start getting a lot of on-device AI, that in theory could reduce the demand for data centres. But if you look at the latest advances in AI, and the massive memory requirements they are driving, it's unlikely that the majority of AI will take place on device.

So I think there are potential negatives to the thesis, but each time you think it through, there's a counter to that negative.

WT: This makes me think of exactly the same kinds of conversations we were having a year ago when everybody started talking about DeepSeek. It was on our radar maybe a month or so before everyone decided it was a huge negative for AI demand, or infrastructure demand.

When we saw it and thought it through, we said, well, any drastic improvement in the efficiency of these models is just going to result in more aggregate demand. And to date, that's what we've seen. It's Jevons paradox, which was originally the observation that the better we got at drilling for oil and the cheaper oil became, the more total demand continued to rise. We're not solving our supply problems by making the fuel cheaper.

Yeah, and I'm glad you brought up DeepSeek because I was going to ask about the same thing. The human mind and its ingenuity have no limits. And now those limits are even further out than we thought, now that we have AI to help us! So the possibility of innovation increasing efficiency suddenly, and in step changes, seems to be quite real.

WT: When I was thinking through this question, it's kind of natural to ask, well, what if something comes out and we don't need to use as much energy? But no, we already know that we're going to need more energy.

Well, what if scaling laws slow down, like Moore's Law slowed down? The slowdown in Moore's Law also coincided with the rise of the GPU and accelerated computing, which caused data centre energy demand to explode again. So I think betting against scaling laws today would be like betting against Moore's Law 50 years ago.

I don't think we're really anywhere close to the end of that. But if we were at the end of that, then it's likely that there would be something else growing even faster, maybe quantum computing, that was even more energy efficient and becoming more efficient at an even faster rate, which would just require us to go out and use even more energy.

Maybe by that point in time, we're launching solar-powered data centres into space because we're constrained by what we can do on Earth.

My two cents is that we're really only just getting started. There will be companies born in the next five or 10 years that are AI-native, that have designed themselves around using agents and the tools that we have available today, and that will become increasingly competitive and demand a response from incumbents. Together, they will just continue to drive demand for tokens higher and higher.

WT: I like to think that Caledon is one of those.

Hey, there you go!

WT: We're a ragtag team finding our way around, using these expensive tools that the legacy funds would be using. We're covering more ground than I could have imagined possible five years ago, at a fraction of the cost of a Bloomberg terminal.

Yeah, and probably a fraction of the time.

BM: Yeah.

Actually, I take that back. It feels like I'm working even harder now, but I'm accomplishing a non-linear multiple of what the time would suggest. It just makes me want to work harder and do more. It's kind of weird.

BM: Jevons paradox. That's the economic imperative, right, of using AI. The incentives are just immense, and we end up using a lot more of it.

All right. So, where do you guys avoid investing? Where do you not look?

BM: You know, I think anything that's commoditised or very competitive. Given my prior experience, we avoid a lot of stuff in China, right?

WT: I think China is very, very competitive. So we're avoiding China. We also generally tend to avoid commodities themselves. There are just too many moving parts, and they're very difficult to predict with any sort of accuracy. So yeah, I'll highlight those two. It's funny, that was the first one on my list too.

When we met, that was our primary point of focus, the Chinese supply chains in solar, batteries, and EVs. I think in general, having limited spots in the portfolio and an expanding universe of good ideas kind of forces us to rule out otherwise good companies exposed to the same kind of demand tailwinds that are either just not growing fast enough, or that we see as not having as much of a moat or recurring revenue.

Well, let me put you on the spot then. Relatedly, why aren't you invested more in the hyperscalers themselves?

BM: Yeah, so I think it goes back to an earlier point you made, Graham, about the capital intensity of software and whether that's changed from what it was in the past. I think there is an element of that, right, that the ROIs of the hyperscalers, at least in the near to medium term, will be lower than they have been in the past, just because of the scale of investment they have to make upfront and the delayed revenue they will get.

And when you look at the kind of spending, the amounts involved from the hyperscalers, and you look at it from the other side, at who they're spending it on, and the relatively small size of the companies that they're spending it on, the risk-adjusted returns just look a lot more attractive on the other side of that equation.

So it's not making an absolute judgment on the hyperscalers themselves. It's just that we see more attractive options elsewhere.

WT: If returns on AI infrastructure investment turn out to be 15 to 20 per cent a year, well, that's great, and that's what the hyperscalers should be doing with their cash. But a lot of that value is accruing to Nvidia and companies in that supply chain. Out of the Magnificent Seven, Nvidia really stands out as the platform that everybody else is relying on. And it is that familiar hardware-software model that's so attractive.

Well, I'm glad you mentioned that, because I did want to ask: why don't you own more semis?

WT: Well, we are an energy fund, so we look across the semiconductor supply chain. But there are obviously areas where we don't think it would be appropriate to invest in this fund, for example, memory. But we do map those out, and there are, for instance, a lot of component suppliers in the AI server supply chain listed in Taiwan that look very interesting. So they're high up on the watch list, especially the ones that are more related to energy saving.

BM: I think, just from a risk point of view, we think it's prudent actually to go for companies that have a broad market distribution, that serve many sectors. Hence, for instance, we like EPCs, as an example, right? They're sector-agnostic. So yeah, semis sometimes feel maybe too specialised, at times, with limited end markets.

WT: We also don't know what exactly could be in the pipeline in terms of energy demand. If things like autonomous EVs or humanoid robots catch on, there's still quite a lot of demand to come from the general electrification of transportation and industry.

Which is a good point to pivot a little bit. Since you guys have spent so much time looking at China, can you give us a quick compare and contrast between China's journey of electrification and AI and America's?

BM: So I think the biggest difference between the US and China is the involvement of the central government, right? If you look at electrification as an example, a lot of energy assets in China are in the west of the country, while energy demand is in the east. The most efficient way of getting energy from the west to the east is ultra-high-voltage cables.

China was able to roll that out at scale and pretty quickly, which speaks to the government's ability to... maybe "get things done" is not the right phrase, but central planning. If you look at the US, for something similar, the permitting around trying to get transmission lines across different states, and the whole not-in-my-backyard movement that you need to overcome, just means things take a lot longer. Infrastructure buildout takes a lot longer in the US for things like that, compared to China.

Will, you might want to contrast certain aspects around AI and electrification.

WT: So in China also, if you go back 20 years to when they were subsidising industries such as solar panel manufacturing, battery manufacturing, the EV supply chain, electrification for them was already existential because they have to import so much of their fuel.

You can see from the current geopolitical instability we're going through now that it would have a much larger impact on China if they weren't already so far ahead of the game in terms of electrifying transportation. I think last year, for the first time, their oil demand fell. That's why they got such a head start there.

And then in terms of AI, the latest and greatest chips aren't available to them. So if they're going to catch up, it's going to be on far less efficient chips from domestic producers like Huawei. And it's going to require a lot more energy than it does in the US. So even though we talk about how fast aggregate demand is growing in the US, when you look at the energy efficiency of a single token, it's far better in the US than it is in China, because the chips are better.

BM: It's interesting because when you take a step back from an investment standpoint, China has excess energy but is behind the US in cutting-edge chips.

When you look at the US, in the design and availability of cutting-edge chips, the US is ahead of China. What it has a shortage of is energy. In a sense, that influenced our strategy of targeting those energy bottlenecks in the US, as opposed to China, where we had started.

Yeah. I'm surprised you guys didn't mention the possibility of an energy shock as a risk to this.

WT: I guess we kind of talked about this, that if oil became an energy bottleneck, then these issues get resolved pretty quickly. I mean, Saudi Arabia, the UAE, yes, all the conflict is happening there, around the Strait of Hormuz, but they're opening a pipeline going the other direction. The US starts drilling again. These things kind of resolve themselves too quickly to be interesting from an investment standpoint, I think.

And the long-term trend is toward less oil anyway, and oil shocks only accelerate demand for solar, batteries, and alternatives.

BM: So speaking of that, one of the things we've taken away from having looked at solar and batteries in China is the steady decline in unit costs. You can see that being very applicable in the US, right? Take something like Bloom Energy, as an example. If you looked at it two or three years ago, it wasn't cost-competitive. But if you look at the trajectory they've been on over time, and if you recall what's happened in China around solar prices, for example, you can see the direction of travel, right?

Particularly with demand for the product, so you've got strong demand for the product and unit costs coming down consistently. You have more confidence in making that call when you've seen similar things elsewhere, right? So you can confidently say, maybe this time will be different.

Versus someone who's looked at Bloom Energy since 2018, if they look at it in a silo, right, and they've looked at it since the IPO or somewhere around there, they just won't believe you if you tell them this time is different. They just won't believe it.

Yeah. I don't think a value investor would put any weight on that possibility. We want cash flow now!

WT: There's something that wasn't really widely available to the investing public, to retail investors, maybe. Before, you needed a CapIQ subscription or something like that. Now you've got these cheaper tools. Maybe you have to pay 50 bucks a month or something, which is still a barrier.

But you can see how analyst consensus estimates have evolved over time. When you find a stock in the AI supply chain that's gone up 10x in the last year, I guarantee you that the earnings estimates two or three years out have also gone up 10x.

If you're just looking at trailing numbers, it can feel like a massive valuation expansion, a multiple expansion. But actually, everyone is looking at what the numbers are two years out and following that. And it's like algorithms following that, or, you know, most investing is passive investing, right?

So it just doesn't feel like the market has gotten out over its skis and started to give these companies credit for another 100 per cent increase in earnings estimates, or for continuing this growth into the next decade. It's more like, yeah, it's just that two-year-out estimate.

But that's because these historically have been cyclical industries, right, because of their high fixed-cost base and what has historically been lumpy and cyclical demand, right? So maybe no one wants to give credence to that. And certainly there's scepticism about paying up for it.

WT: Yeah. You just never had a massive, exponential growth driver, a demand driver.

Yeah. So it's the demand that's changed, I think. Yeah. It's incredible. That's a very good articulation.

As we come to the end of this podcast, and we've covered a lot of ground across a lot of different themes, it's been super interesting for me. I want to touch on one last question, which is to ask you guys about how you perform your research. Because between the three of you, I think you're some of the best idea hunters that I've ever come across, and I'm constantly in awe of the companies that you find and the names that I see in your portfolio.

So you've kind of alluded to this before, but maybe now is a good chance to focus on it a little bit. Tell us about your research process and how you go about finding the ideas that make it into the Caledon fund.

BM: Yeah, so the research process is basically premised on mapping out value chains end to end, and just understanding where the bottlenecks might be. It's something we did just to get to grips with the Chinese market, given how competitive it is and how rare it is to find companies that make sustainably high margins.

If you then apply that approach to the US, which we eventually did, it's fascinating the sort of companies that you unearth, right? Because you keep an open mind about what could be in that value chain.

And coming back to that whole idea of having started by looking at China, and then ending up applying that process in the US, I think what I've found is, and I'm paraphrasing here, the best way to understand a sector or country is actually to study a different one. Because that gives you original insights, since you are applying ideas that you've seen in one part of the world to another part of the world that may be catching up, for instance, in industrialising or reshoring in the US.

So I think it's the perspective that we gained from looking at China that's proving to be quite useful in its application, particularly in the US.

WT: Yeah. I feel like working with Brian and James has really supercharged my focus. Prior to meeting Brian and James, my investment experience was spread across a bunch of different geographies and was very generalised. So I was looking at semiconductor stocks, but I was also looking at the largest wig seller in Japan, or, you know, things that don't really add up to much cumulative knowledge of an industry, right?

But having that focus on these supply chains has been a great way to structure the data that we have in our brains. James or Brian will remember a company they looked at 10 years ago, or I remember some company that fits in. And it's much easier to get up to speed very quickly, understand how it fits in, and whether there's an opportunity there or what the implications are for something that we already own.

Yeah, I think it's been a very rewarding way to work.

That's so cool. Focus is a superpower. I agree with you.

Guys, let's wrap it up there. It's been a pleasure to talk with you again. If anyone wants to follow up or get in touch with you, what's the best way to reach out?

BM: Well, I think the best way to reach out is either via email, [email protected] or [email protected], or via our website, www.caledonip.com.

And if you enjoyed this conversation and would like to learn more about Long River, or to hear others just like it, you can visit my site at www.longriverinv.com.

Guys, it's been a pleasure. Thanks again!

WT: Thanks, Graham.

BM: Thank you, Graham.